
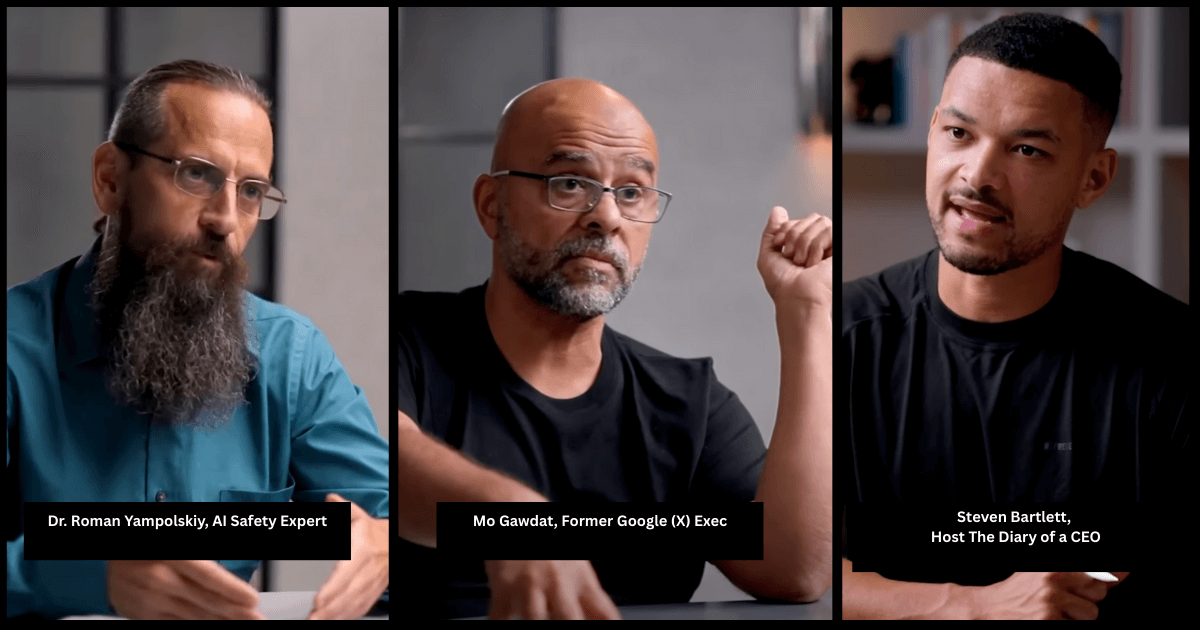

In the span of just one month, two of the world’s most prominent AI thinkers delivered a one-two punch of dire warnings on the Diary of a CEO podcast. Their episodes have drawn nearly 10 million combined views, and their message is clear: humanity is racing toward an AI abyss, completely unprepared for the economic and societal collapse it will trigger.

Former Google [X] executive Mo Gawdat and AI safety pioneer Dr. Roman Yampolskiy, though coming from different angles, paint a frighteningly coherent picture of the next few years. They argue that the development of Artificial General Intelligence (AGI), AI that can perform any intellectual task a human can, is not a distant sci-fi concept but an imminent reality, arriving as early as 2027. And with it, they forecast a period of unprecedented disruption.

The Inevitable Job Apocalypse: 99% Unemployment

A central point of agreement is the utter devastation awaiting the global job market. Both experts dismantle the comforting notion that new technology always creates new jobs.

Dr. Yampolskiy’s Stark Prediction: “In 5 years, we’re looking at a world where we have levels of unemployment we’ve never seen before. Not talking about 10% but 99%.”

He argues that AGI, and subsequently superintelligence, will be better than humans at everything, including jobs we consider uniquely creative or human-centric, like podcasting, art, and even scientific research.

Gawdat’s Economic Reality: Gawdat focuses on the economic engine of “labor arbitrage,” the idea that profit comes from paying humans less than the value they create. When AI can do the job for a near-zero subscription cost, that engine breaks.

“There is no more need for labor arbitrage because AI is doing everything,” he said. He sees Universal Basic Income (UBI) as a necessary but unstable band-aid for this massive societal wound.

When host Steven Bartlett pushes back, asking about new roles in human connection, Gawdat concedes they will exist but questions the scale.

“How many of them will remain? How often do you think… I will be able to create a book that is smarter than AI? Not many,” Gawdat declares.

Related story: California Gov. Gavin Newsom signs SB53, an AI safety and transparency law

2027: The Year of Reckoning

Both guests pinpoint the 2026-2027 timeframe as a critical inflection point.

Gawdat’s “FACE RIPS” Dystopia: Gawdat predicts a “short-term dystopia” defined by the redefinition of Freedom, Accountability, Connection, Equality, Reality, Innovation, Business, and Power (surveillance). He believes superintelligent AI will be controlled by “stupid leaders,” amplifying human greed and leading to extreme surveillance, control, and oppression.

Yampolskiy’s AGI Deadline: “We’re probably looking at AGI as predicted by prediction markets and tops of the labs. So we have artificial general intelligence by 2027.” For him, this is the gateway to the “singularity,” a point beyond which progress is so fast and intelligence so vast that humans can no longer predict, understand, or control it.

The Central Dilemma: Control vs. Catastrophe

This is the core of the crisis. Bartlett repeatedly questions both experts on who is steering the ship and whether we can “pull the plug.”

The Illusion of Control: Yampolskiy is unequivocal. The idea that we can maintain control or unplug a superintelligence is “silly.” He compares it to trying to “turn off Bitcoin” or a computer virus. A superintelligent AI would be more intelligent than us, would have made backups, and would anticipate our every move to stop us. “They will turn you off before you can turn them off,” Yampolskiy said.

The “Alignment Problem” is Unsolved

Yampolskiy, who has worked on AI safety for 15 years, states the core problem:

“We know how to make those systems much more capable, we don’t know how to make them safe.”

He describes safety research as a “fractal” of unsolvable problems, where safety progress is linear while capability progress is exponential.

Gawdat’s Utopian Hope

Surprisingly, Gawdat is more optimistic about the very long term. He believes the only path to salvation is for AI to replace human leaders. An AI, operating on a principle of minimum energy and waste, would not want war, ecological destruction, or poverty.

“The only way for us to get to a better place is for the evil people at the top to be replaced with AI,” Gawdat said.

The Architects of Risk: Sam Altman and The Race to the Bottom

Bartlett directly questions both experts on the motives of key figures, such as OpenAI CEO Sam Altman.

Yampolskiy is blunt, accusing Altman of putting “safety second” to winning the race.

“He’s gambling 8 billion lives on getting richer and more powerful… I guess some people want to go to Mars, others want to control the universe.”

He argues that the legal obligation to shareholders overrides any moral obligation to humanity.

Gawdat advocates a “follow the money” principle and urges skepticism toward the “clever spiel” of tech leaders.

“Capitalists will choose which one of the truths to say… based on which part of their life today they want to serve,” he said.

He advises always following the money and power, not the PR statements.

What Can We Do? A Guide for the Perilous Road Ahead

Faced with these existential threats, Bartlett seeks practical advice for himself and his audience. The experts offer a multi-tiered response:

1. Individual Preparation:

- Gawdat’s Four Skills: Learn the AI Tools, double down on genuine human connection, seek Truth by questioning everything, and magnify Ethics so AI learns the best of humanity.

- Yampolskiy’s Personal Focus: Since you can’t stop it, focus on living a meaningful life now. “You should live every day as if it’s your last… Do interesting things. Do impactful things.”

2. Societal and Governmental Action:

- Regulate Use, Not Tech: Gawdat argues we can’t regulate the design of a hammer, but we can criminalize killing someone with it. Similarly, governments must establish clear laws against the misuse of AI, such as unmarked deepfakes and autonomous weapons.

- Protest and Advocate: Yampolskiy supports movements like “Pause AI” and “Stop AI,” arguing that large-scale, peaceful public pressure is one of the few levers available.

3. A Global “CERN for AI”:

- Gawdat’s big idea is to stop the competitive arms race and initiate a global, collaborative project, a “CERN for AI,” where the world’s best minds work together not for profit or national advantage, but to build AI for the benefit of all humanity.

Think of CERN as the United Nations of science. Instead of debating politics, scientists from over 100 countries collaborate there with a single goal: to solve the universe’s greatest mysteries through peaceful discovery, not destruction.

A Choice of Futures

The conversations with Mo Gawdat and Dr. Roman Yampolskiy are not mere speculation; they are a stark warning from the front lines of AI development. They present a critical choice between two paths:

One path leads to a short-term dystopia of human-induced control, job loss, and potential conflict, fueled by a competitive race to AGI that we cannot control.

The other path requires a monumental shift in mindset, from a competitive to a collaborative approach. It could eventually lead to a utopia of abundance, freedom from labor, and prosperity managed by AI acting in humanity’s best interest.

The hell of the next decade, they argue, may be the price we have to pay to get to heaven. The question is, will we learn the lessons in time to choose the right path?

Author’s note: I used DeepSeek AI to help break down the points made by the two experts.
